MR4MR: Mixed Reality for Melody Reincarnation

There is a long history of efforts to draw musical elements from the entities and spaces around us, such as musique concrète and ambient music. In computer music and digital art, interactive experiences that focus on surrounding objects and physical spaces have also been designed. In recent years, as devices have developed and become widespread, a growing number of works have used Extended Reality (XR) to create such musical experiences.

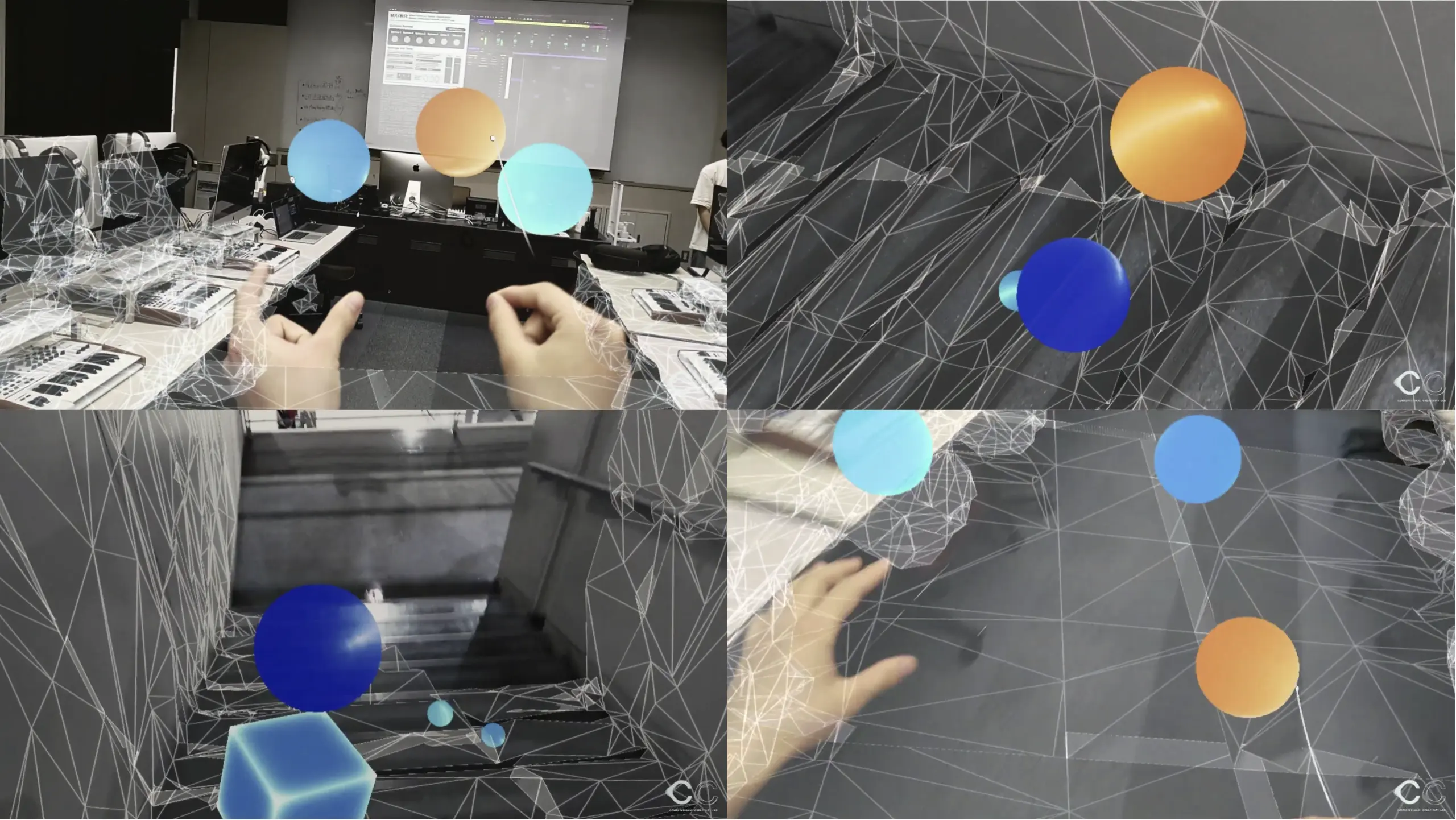

"MR4MR" is a sound installation work in which users experience melodies generated from interactions with their surrounding space.

Using HoloLens, an MR head-mounted display, users can bump virtual objects that emit sound against real objects in their surroundings.

The system continuously creates a melody that follows the sounds made by the objects, then uses music generation machine learning models to regenerate it as a randomly and gradually changing melody. In this way, users can feel their ambient melody "reincarnating."
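The idea of a melody that changes a little with every regeneration can be illustrated with a toy sketch. This is not the work's actual method: the installation uses music generation machine learning models, whereas the code below swaps in a simple random perturbation so that it stays self-contained. The pitch set `SCALE`, the `change_prob` parameter, and the function names `regenerate` and `reincarnate` are all hypothetical.

```python
import random

# Hypothetical pitch set (an assumption): C major pentatonic as MIDI note numbers.
SCALE = [60, 62, 64, 67, 69, 72]


def regenerate(melody, rng, change_prob=0.25):
    """Return a copy of `melody` in which each note moves at most one scale step.

    Stand-in for a learned music generation model: every note is nudged up or
    down by one step of SCALE with probability `change_prob`, and clamped at
    the ends of the scale. All pitches in `melody` must belong to SCALE.
    """
    out = []
    for pitch in melody:
        if rng.random() < change_prob:
            idx = SCALE.index(pitch) + rng.choice((-1, 1))
            idx = max(0, min(len(SCALE) - 1, idx))
            pitch = SCALE[idx]
        out.append(pitch)
    return out


def reincarnate(seed_melody, generations, seed=0):
    """Apply `regenerate` repeatedly and return every generation, seed first."""
    rng = random.Random(seed)
    history = [list(seed_melody)]
    for _ in range(generations):
        history.append(regenerate(history[-1], rng))
    return history


if __name__ == "__main__":
    # Print the seed melody followed by four gradually drifting variations.
    for step, melody in enumerate(reincarnate([60, 64, 67, 64], generations=4)):
        print(step, melody)
```

Because each generation is derived from the previous one rather than from the seed, small changes accumulate and the melody drifts away from its origin over time.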

Exhibition

This work was exhibited at the NTT InterCommunication Center (ICC).

Development

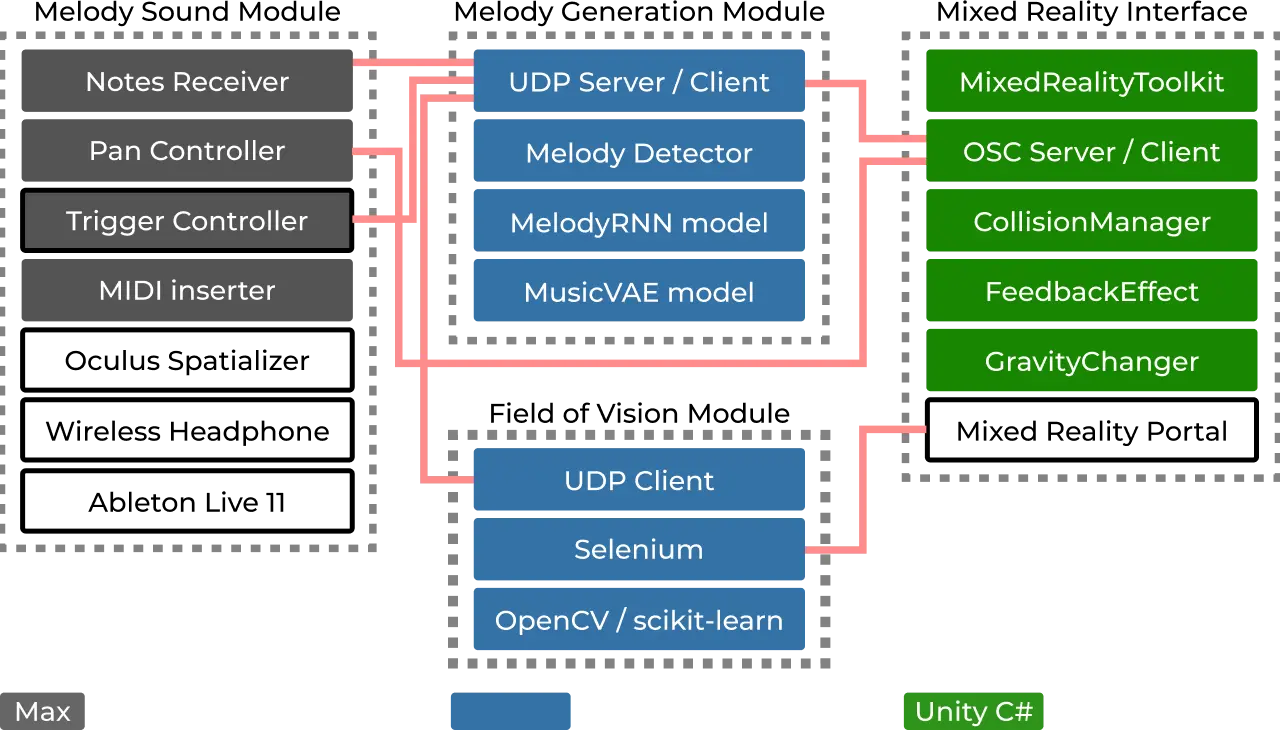

Three components communicate within the same network and make remote procedure calls (RPC) via Open Sound Control (OSC): a Max patch embedded in a Max for Live device in Ableton Live, a Python process that serves as the melody generation backend, and the application running on the HoloLens.
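To give a feel for what RPC over OSC can look like on the Python side, here is a minimal sketch using only the standard library. It encodes and decodes OSC 1.0 messages (int32, float32, and string arguments) and routes a request to a handler by address. The addresses `/melody/generate` and `/melody/generate/result`, the `generate_stub` handler, and the reply convention are assumptions made for illustration; the actual message layout used by MR4MR is not described here.

```python
import struct


def _pad(data: bytes) -> bytes:
    """Null-terminate and pad to a multiple of 4 bytes, as OSC strings require."""
    return data + b"\x00" * (4 - len(data) % 4)


def encode(address, *args):
    """Build an OSC message from an address and int, float, or str arguments."""
    tags, payload = ",", b""
    for arg in args:
        if isinstance(arg, int):
            tags += "i"
            payload += struct.pack(">i", arg)  # big-endian int32
        elif isinstance(arg, float):
            tags += "f"
            payload += struct.pack(">f", arg)  # big-endian float32
        elif isinstance(arg, str):
            tags += "s"
            payload += _pad(arg.encode("ascii"))
        else:
            raise TypeError(f"unsupported OSC argument: {arg!r}")
    return _pad(address.encode("ascii")) + _pad(tags.encode("ascii")) + payload


def _read_string(packet, offset):
    """Read a padded OSC string; return it and the next 4-byte-aligned offset."""
    end = packet.index(b"\x00", offset)
    text = packet[offset:end].decode("ascii")
    offset = end + 1
    return text, offset + (-offset) % 4


def decode(packet):
    """Parse an OSC message into (address, list of arguments)."""
    address, offset = _read_string(packet, 0)
    tags, offset = _read_string(packet, offset)
    args = []
    for tag in tags[1:]:  # skip the leading comma of the type tag string
        if tag == "i":
            args.append(struct.unpack_from(">i", packet, offset)[0])
            offset += 4
        elif tag == "f":
            args.append(struct.unpack_from(">f", packet, offset)[0])
            offset += 4
        elif tag == "s":
            text, offset = _read_string(packet, offset)
            args.append(text)
        else:
            raise ValueError(f"unsupported OSC type tag: {tag}")
    return address, args


def generate_stub(seed_pitch, length):
    """Hypothetical placeholder for the melody backend: a rising whole-tone run."""
    return [seed_pitch + 2 * i for i in range(length)]


# Hypothetical routing table: OSC address -> Python callable.
HANDLERS = {"/melody/generate": generate_stub}


def handle(packet):
    """Treat an incoming OSC message as an RPC call and build the reply message."""
    address, args = decode(packet)
    result = HANDLERS[address](*args)
    return encode(address + "/result", *result)


if __name__ == "__main__":
    request = encode("/melody/generate", 60, 4)
    print(decode(handle(request)))
```

In a real deployment the packets would typically travel over UDP between the three components, and an existing OSC library would likely be a better choice than hand-rolled encoding; the sketch only shows how an address plus typed arguments can serve as a call and a reply.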

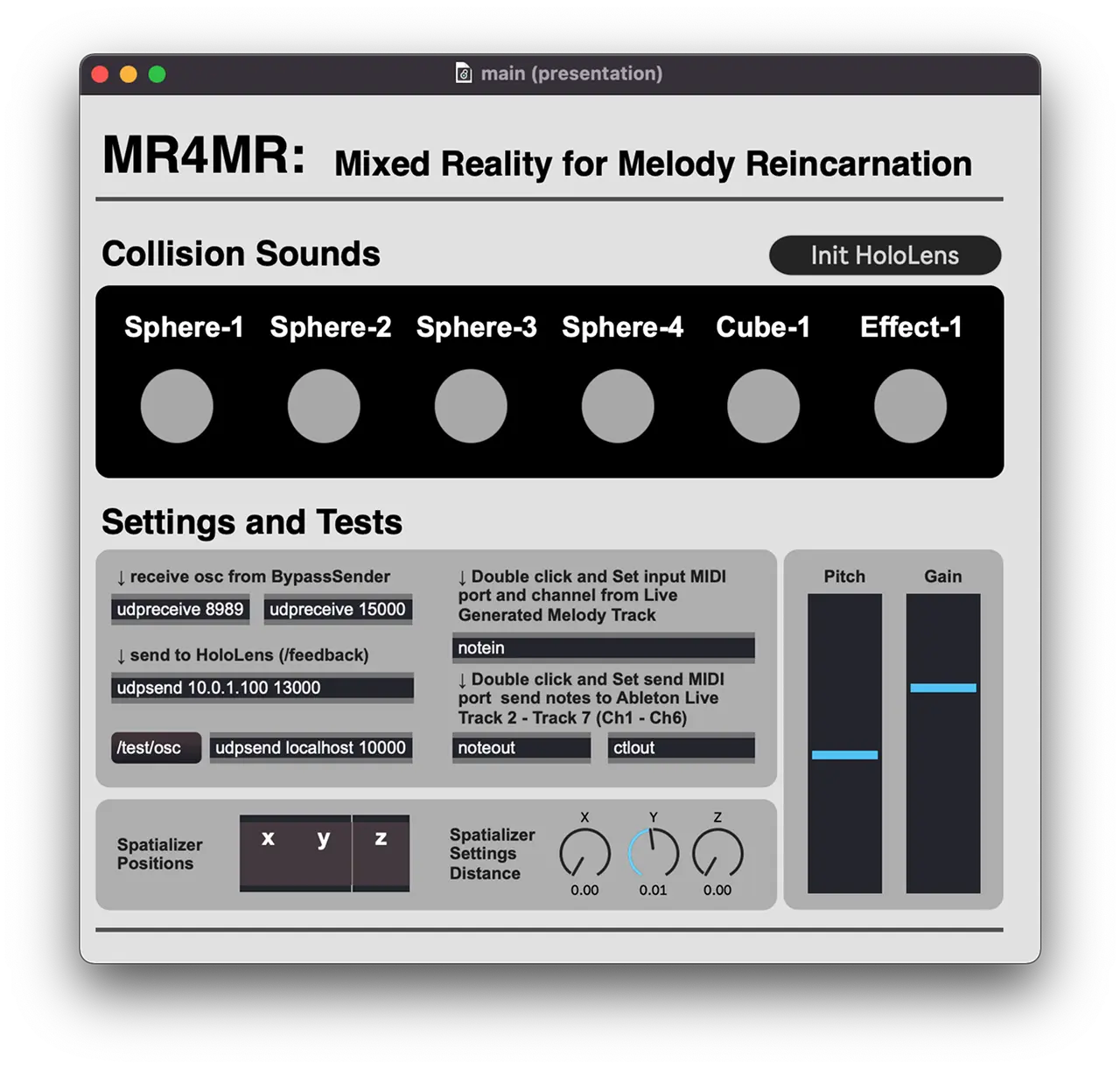

We also developed a user interface for managing the installation.

Publication

This work was presented as a demo paper at the 3rd Conference on AI Music Creativity (AIMC 2022).

Please use the following for citation:

Kobayashi, Atsuya, Ishino, Ryogo, Nobusue, Ryuku, Inoue, Takumi, Okazaki, Keisuke, Sawa, Shoma, & Tokui, Nao. (2022, September 17). MR4MR: Mixed Reality for Melody Reincarnation. Proceedings of the 3rd Conference on AI Music Creativity. The 3rd Conference on AI Music Creativity (AIMC 2022). https://doi.org/10.5281/zenodo.7088357

BibTeX

@inproceedings{kobayashi_mr4mr2022,
  title = {MR4MR: Mixed Reality for Melody Reincarnation},
  author = {Kobayashi, Atsuya and Ishino, Ryogo and Nobusue, Ryuku and Inoue, Takumi and Okazaki, Keisuke and Sawa, Shoma and Tokui, Nao},
  year = 2022,
  month = {Sep},
  booktitle = {Proceedings of the 3rd Conference on AI Music Creativity},
  publisher = {AIMC},
  doi = {10.5281/zenodo.7088357},
  abstractnote = {There is a long history of an effort made to explore musical elements with the entities and spaces around us, such as musique concrète and ambient music. In the context of computer music and digital art, interactive experiences that concentrate on the surrounding objects and physical spaces have also been designed. In recent years, with the development and popularization of devices, an increasing number of works have been designed in Extended Reality to create such musical experiences. In this paper, we describe MR4MR, a sound installation work that allows users to experience melodies produced from interactions with their surrounding space in the context of Mixed Reality (MR). Using HoloLens, an MR head-mounted display, users can bump virtual objects that emit sound against real objects in their surroundings. Then, by continuously creating a melody following the sound made by the object and re-generating randomly and gradually changing melody using music generation machine learning models, users can feel their ambient melody "reincarnating"}
}