ExSampling: a system for the real-time ensemble performance of field-recorded environmental sounds

ExSampling is an integrated system that combines a recording application with a Deep Learning environment for real-time music performance using environmental sounds captured through field recording. Deep Learning maps sounds to Ableton Live tracks automatically, which makes field recordings usable in real-time performance and creates interactions among sound recorders, composers, and performers.

Classification is performed by a MobileNetV2-based environmental sound classification model trained on ESC-50 (ml-sound-classifier by @daisukelab). Its predictions are used to map recorded sounds automatically to tracks in Ableton Live (a DAW application) via OSC messages.

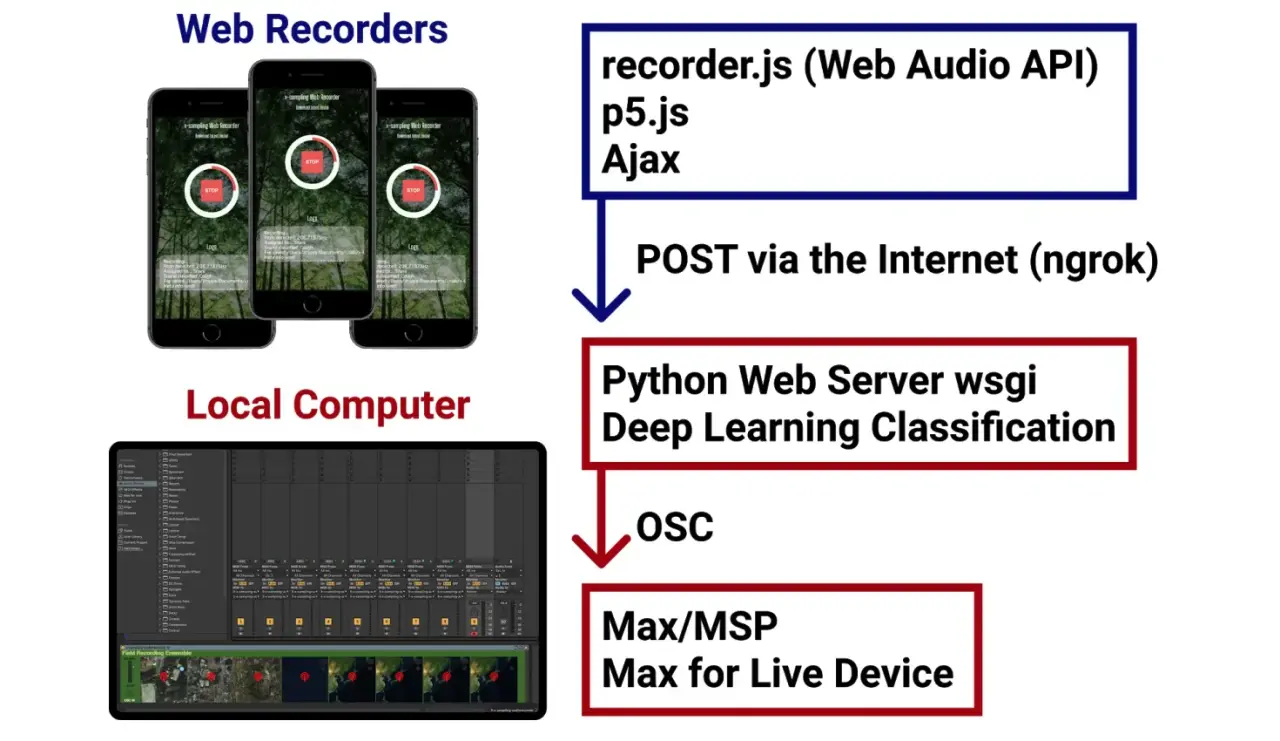

System Overview

ExSampling consists of three components:

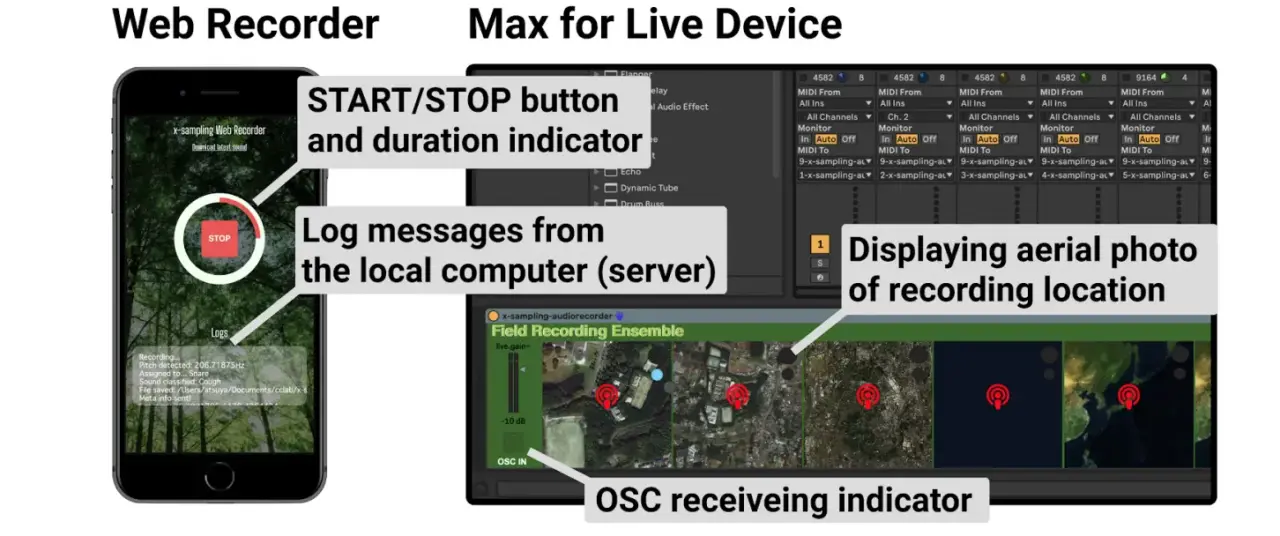

- Web Recording Application (field recorder side): Carried by the field recorder to capture environmental sounds in real time. Recorded audio data is sent over a network to the inference server.

- Python Inference Server (laptop): Runs the Deep Learning model on incoming audio data to classify the environmental sound category. The result is sent as an OSC (Open Sound Control) message to Ableton Live.

- Ableton Live (performer side): Receives OSC messages via Max for Live and swaps the sound source assigned to the corresponding track. Performers use the continuously incoming samples to play an ensemble performance in real time.
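The source does not specify how the recorded audio is encoded when it is sent to the inference server. As a minimal sketch, assuming the web app uploads 16-bit PCM WAV bytes, the server could decode each upload into mono samples using only the standard library. `decode_wav_upload` is a hypothetical helper and is not part of ExSampling.

```python
from __future__ import annotations

import io
import struct
import wave


def decode_wav_upload(payload: bytes) -> tuple[int, list[float]]:
    """Decode a 16-bit PCM WAV upload into (sample_rate, mono samples in [-1, 1))."""
    # Assumption: the upload is a complete 16-bit PCM WAV file held in memory.
    with wave.open(io.BytesIO(payload), "rb") as wav:
        if wav.getsampwidth() != 2:
            raise ValueError("expected 16-bit PCM")
        rate = wav.getframerate()
        channels = wav.getnchannels()
        frames = wav.readframes(wav.getnframes())
    count = len(frames) // 2
    samples = struct.unpack(f"<{count}h", frames)  # WAV PCM data is little-endian
    # Average interleaved channels down to mono and scale int16 to [-1, 1).
    return rate, [
        sum(samples[i:i + channels]) / channels / 32768.0
        for i in range(0, count, channels)
    ]
```

Before the samples reach the classifier they would still have to be resampled to whatever rate the model expects. This sketch does not do that.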

Sounds captured outdoors reach the sampler sources used on stage within a few seconds, and the system incorporates location data into this mechanism. The Ableton Live interface displays an aerial photograph of the surroundings, so performers can either listen to the incoming sounds or look at the landscape to understand what has been recorded.

This architecture creates an organic interaction between the field recorder and the on-stage performers.

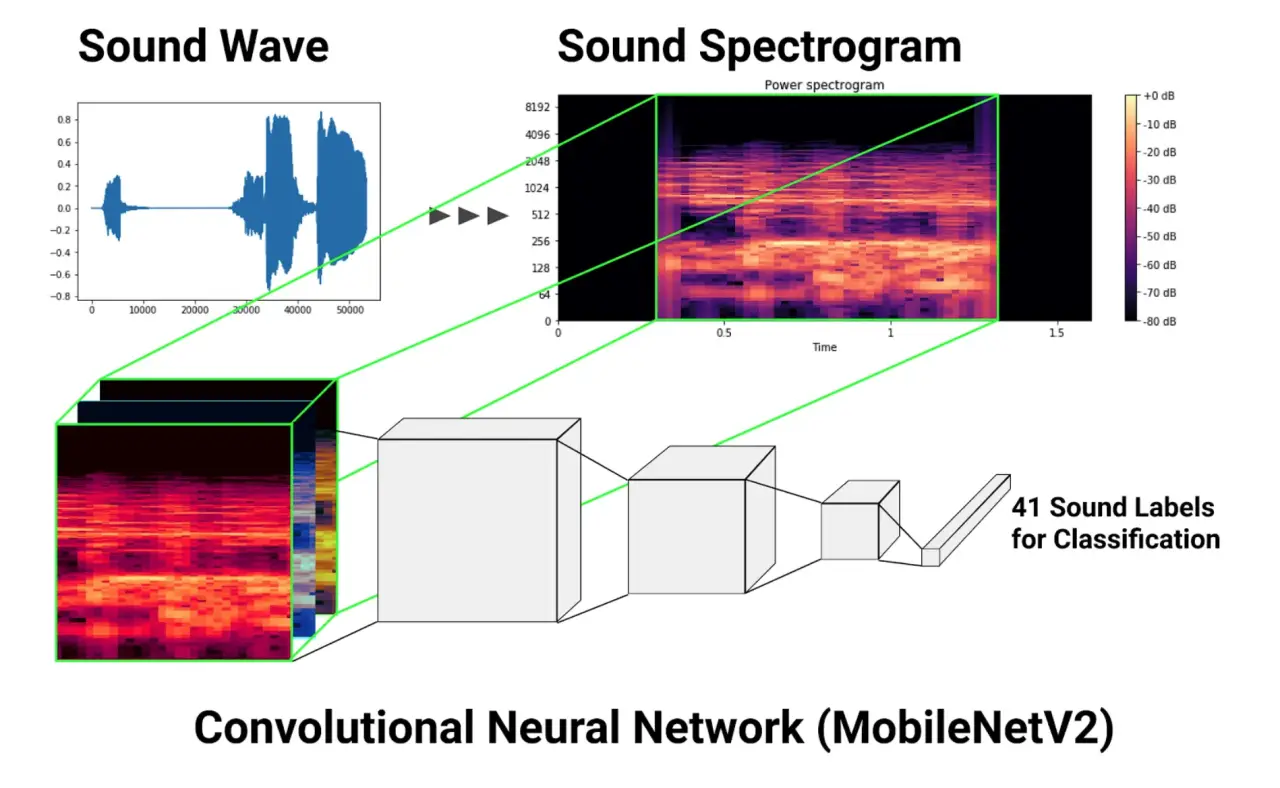

Classification Architecture

The classification model is based on ml-sound-classifier by daisukelab, using a MobileNetV2 backbone.

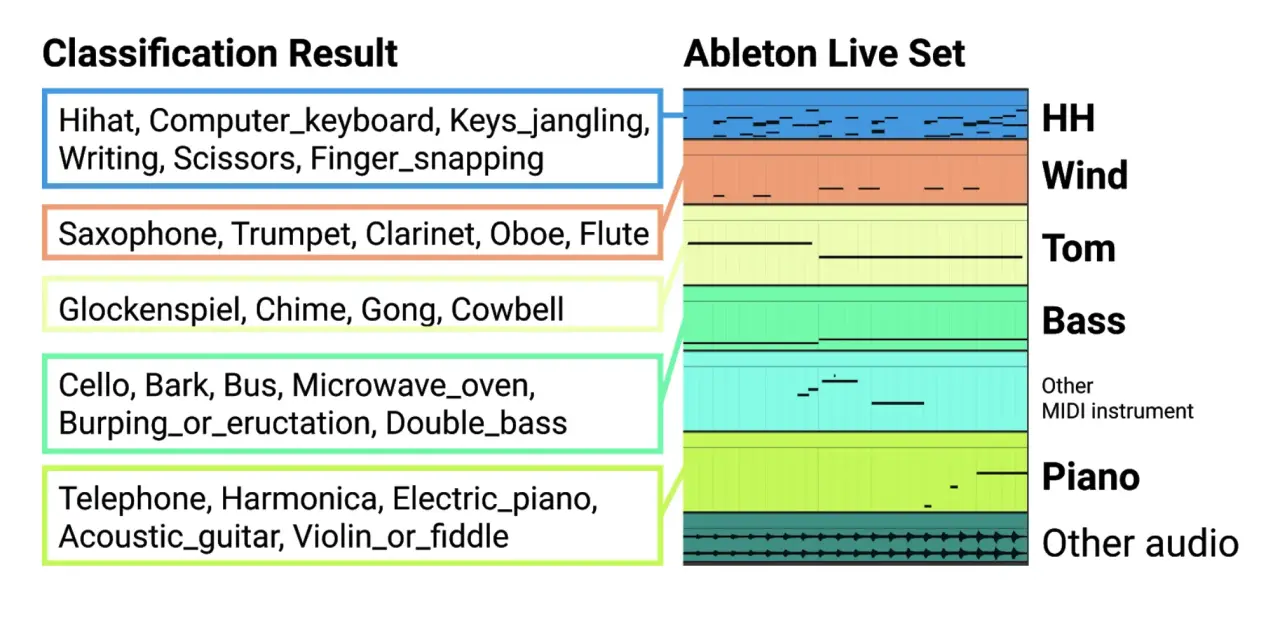
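The source does not describe how the model's output becomes a single category. A common approach, assumed here, is to take the arg-max over the per-class scores, treated as raw logits. `top_category` is a hypothetical helper that also reports the softmax probability of the winning class so it can be logged or inspected.

```python
from __future__ import annotations

import math


def top_category(scores: list[float], labels: list[str]) -> tuple[str, float]:
    """Return the highest-scoring label and its softmax probability."""
    if not scores or len(scores) != len(labels):
        raise ValueError("need one score per label")
    peak = max(scores)
    # Subtracting the maximum before exp() avoids overflow without changing the softmax.
    exps = [math.exp(s - peak) for s in scores]
    best = max(range(len(scores)), key=scores.__getitem__)
    return labels[best], exps[best] / sum(exps)
```

If the model already ends in a softmax layer, the returned probability would be distorted by the second softmax, but the chosen label would not change.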

MIDI Track Mapping

The Python inference server sends OSC messages to Ableton Live, which automatically triggers clips based on the classified category.

The detailed flow is as follows:

- The predicted category (e.g. `bird`, `rain`, `sea_waves`) is converted to a category ID (integer)

- The ID is attached to the OSC address `/exsampling/category` and transmitted

- The Max for Live device in Ableton Live receives the OSC message and triggers the corresponding MIDI note

- Each MIDI track has a pre-loaded field-recorded environmental sound clip (sampler instrument)

- The triggered clip is played as performance audio, with the performer controlling volume and effects in real time
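The transmission step in this flow can be sketched at the byte level. The source names the OSC address but not the argument type or the port. This sketch assumes a standard OSC 1.0 message with a single int32 argument, which needs only the standard library. The function names and port 9000 are hypothetical placeholders, and a library such as python-osc could do the same job; the source does not say which approach ExSampling uses.

```python
import socket
import struct


def osc_string(text: str) -> bytes:
    """OSC string: ASCII bytes, NUL-terminated, zero-padded to a multiple of 4."""
    raw = text.encode("ascii") + b"\x00"
    return raw + b"\x00" * (-len(raw) % 4)


def encode_category_message(category_id: int, address: str = "/exsampling/category") -> bytes:
    """Build an OSC 1.0 message whose single argument is a big-endian int32."""
    return osc_string(address) + osc_string(",i") + struct.pack(">i", category_id)


def send_category(category_id: int, host: str = "127.0.0.1", port: int = 9000) -> None:
    """Send the message over UDP; host and port are placeholders, not ExSampling settings."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(encode_category_message(category_id), (host, port))
```

For category ID 7, the message is 32 bytes long. The address takes 24 bytes, the type tag `,i` takes 4, and the integer takes 4. The Max for Live device must listen on the same UDP port that the server sends to.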

Rather than mapping all 50 ESC-50 categories directly one-to-one to Ableton Live tracks, a subset of categories relevant to the performance context is selected when composing tracks. This allows performers to design the musical structure with prior knowledge of what kinds of sounds to expect.
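The subset selection can be sketched as a lookup table built once per composition. The source does not give the actual ID scheme, and the helper names here are hypothetical. In this sketch, IDs are assigned in selection order, and a category outside the subset yields `None`. Skipping the OSC message in that case is an assumption, not documented behavior.

```python
from __future__ import annotations


def build_category_ids(selected: list[str]) -> dict[str, int]:
    """Assign consecutive integer IDs (starting at 0) to the categories chosen for a piece."""
    if len(set(selected)) != len(selected):
        raise ValueError("duplicate category in selection")
    return {name: index for index, name in enumerate(selected)}


def category_to_id(category: str, ids: dict[str, int]) -> int | None:
    """Return the ID for a selected category, or None if it is not part of the piece."""
    return ids.get(category)
```

For example, `build_category_ids(["bird", "rain", "sea_waves"])` gives `rain` the ID 1, and an unselected category such as `dog` maps to `None`.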

BibTeX

@inproceedings{kobayashi_NIME20_58,
  author = {Kobayashi, Atsuya and Anzai, Reo and Tokui, Nao},
  title = {ExSampling: a system for the real-time ensemble performance of field-recorded environmental sounds},
  pages = {305--308},
  booktitle = {Proceedings of the International Conference on New Interfaces for Musical Expression},
  editor = {Michon, Romain and Schroeder, Franziska},
  year = {2020},
  month = jul,
  publisher = {Birmingham City University},
  address = {Birmingham, UK},
  issn = {2220-4806},
  doi = {10.5281/zenodo.4813371},
  url = {https://www.nime.org/proceedings/2020/nime2020_paper58.pdf}
}