Drive-and-Listen: Turning driving scenery into ambient music - Tokyo

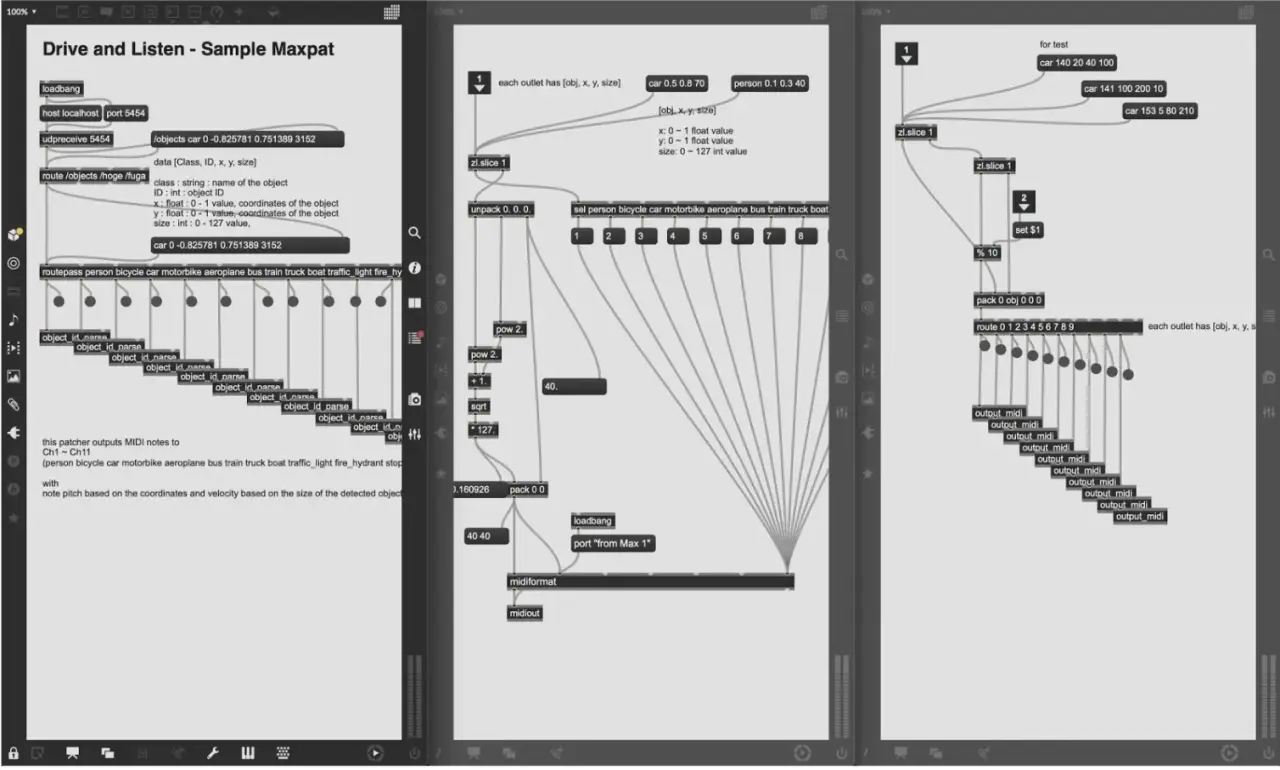

We developed a CPU-based environment for running YOLO (v2, v3) object recognition models on Darknet, together with a custom OSC sequencer for playback.
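The OSC message format used between the detector and the sequencer is not documented here. As a sketch only, assuming the detector sends one OSC message per detected object over UDP, an OSC 1.0 message can be encoded with the Python standard library alone. The `/detection` address, its argument order (label, confidence, horizontal position) and all helper names are hypothetical, not the project's actual protocol.

```python
import socket
import struct


def _osc_pad(data: bytes) -> bytes:
    """Null-terminate and pad to a multiple of 4 bytes, as OSC 1.0 requires for strings."""
    data += b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def encode_osc_message(address: str, *args) -> bytes:
    """Encode an OSC 1.0 message with int32 ('i'), float32 ('f') or string ('s') arguments."""
    type_tags = ","
    payload = b""
    for arg in args:
        if isinstance(arg, bool):
            # bool is a subclass of int in Python; OSC 'T'/'F' tags are out of scope here.
            raise TypeError("bool arguments are not supported in this sketch")
        if isinstance(arg, int):
            type_tags += "i"
            payload += struct.pack(">i", arg)  # big-endian int32
        elif isinstance(arg, float):
            type_tags += "f"
            payload += struct.pack(">f", arg)  # big-endian float32
        elif isinstance(arg, str):
            type_tags += "s"
            payload += _osc_pad(arg.encode("utf-8"))
        else:
            raise TypeError(f"unsupported OSC argument type: {type(arg).__name__}")
    return _osc_pad(address.encode("utf-8")) + _osc_pad(type_tags.encode("ascii")) + payload


def send_detection(sock: socket.socket, host: str, port: int,
                   label: str, confidence: float, x_center: float) -> None:
    """Send one detection to a hypothetical '/detection' address on the sequencer."""
    msg = encode_osc_message("/detection", label, float(confidence), float(x_center))
    sock.sendto(msg, (host, port))
```

For example, `send_detection(socket.socket(socket.AF_INET, socket.SOCK_DGRAM), "127.0.0.1", 7400, "car", 0.87, 0.42)` would send a `/detection` message with type tags `,sff` to a sequencer assumed to listen on port 7400.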

The detections are then sorted and deduplicated with non-maximum suppression (NMS), and each unique recognized object is converted into sound. The player is built on a MIDI connection between Max and Ableton Live.
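Greedy, class-aware non-maximum suppression, the usual post-processing step for YOLO detections, sorts candidate boxes by confidence and discards any box that overlaps a higher-scoring box of the same class too heavily. The sketch below is a plain-Python illustration, not the project's code; the `(x1, y1, x2, y2)` box format, the helper names and the 0.45 IoU threshold are assumptions.

```python
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # assumed format: (x1, y1, x2, y2), x2 > x1, y2 > y1


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(boxes: Sequence[Box], scores: Sequence[float],
                        labels: Sequence[str], iou_threshold: float = 0.45) -> List[int]:
    """Greedy per-class NMS; returns indices of kept boxes, highest score first."""
    # Sort candidate indices by confidence, descending.
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep: List[int] = []
    while order:
        best = order.pop(0)
        keep.append(best)
        # Keep a remaining box only if it is a different class
        # or does not overlap the kept box beyond the threshold.
        order = [
            i for i in order
            if labels[i] != labels[best] or iou(boxes[best], boxes[i]) <= iou_threshold
        ]
    return keep
```

Each surviving index then stands for one unique object, which is what would be handed on to the sound-generation stage.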

Credits

- Atsuya Kobayashi

- Takumi Inoue

- Keisuke Okazaki

- Yoriaki Hirota