How to Handle Music AI — Deep Music Generation Models and Interface Research (2021)

This article is a repost from Medium.

Introduction

AI-powered music and melody generation has advanced to the point where it can produce highly sophisticated results. OpenAI's Jukebox, announced last year, is a huge Transformer-based model that works in the waveform domain and uses enormous computational resources to generate audio conditioned on inputs such as lyrics. The results are quite realistic.

On the other hand, symbolic generation methods, which output MIDI and similar formats, have also become capable of quite sophisticated expression. Several kinds of methods that can effectively support composition have been proposed, including deep learning models that generate melodies matching lyrics by conditioning on the lyric text, and models that cleanly blend multiple melodies together.
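As a rough illustration of what "blending" can mean for a latent-variable model, the sketch below interpolates between two latent vectors with spherical interpolation. It assumes the two melodies have already been encoded into vectors by some model; the names `slerp` and `blend_path` are hypothetical helpers, not part of any library, and decoding the blended vectors back to MIDI is left out.

```python
import numpy as np

def slerp(z_a, z_b, t):
    """Spherical interpolation between two latent vectors, t in [0, 1]."""
    z_a = np.asarray(z_a, dtype=float)
    z_b = np.asarray(z_b, dtype=float)
    cos_omega = np.dot(z_a, z_b) / (np.linalg.norm(z_a) * np.linalg.norm(z_b))
    omega = np.arccos(np.clip(cos_omega, -1.0, 1.0))
    if np.isclose(np.sin(omega), 0.0):
        # Vectors are (anti)parallel: fall back to linear interpolation.
        return (1.0 - t) * z_a + t * z_b
    return (np.sin((1.0 - t) * omega) * z_a + np.sin(t * omega) * z_b) / np.sin(omega)

def blend_path(z_a, z_b, steps):
    """Return `steps` latent vectors moving from z_a to z_b."""
    return [slerp(z_a, z_b, t) for t in np.linspace(0.0, 1.0, steps)]

# Toy 2-D "latent codes" standing in for two encoded melodies (assumption).
path = blend_path([1.0, 0.0], [0.0, 1.0], steps=5)
```

Each vector in `path` would then be passed to the model's decoder to obtain one intermediate melody.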

Use Cases for Music (Melody) Generation AI

Let me introduce some representative product cases.

Magenta Studio - Magenta Tensorflow

Google Magenta is an open-source research project that develops deep learning models such as MelodyRNN, MusicVAE, GrooVAE, and Music Transformer, and provides open-source tools. Magenta Studio is a tool that can be used as a plugin for Ableton Live.

By loading it into Live as a Max for Live device, you can easily generate multiple MIDI clips and bring them into your own production environment.

Orb Producer Suite - ORB COMPOSER

Orb Composer is a composition support tool using artificial intelligence, characterized by having many parameters that users can control.

Values for controlling melody characteristics include Complexity and Density, and you can control length and rhythm using Bar Length, Octave, Syncopation, Human Touch, Polyphony, etc. Generation of chord progressions and beats can be controlled similarly.

Flow Machines - AI assisted music production

This is a project from Sony CSL, which develops AI music production support tools and also runs music production projects that use them. A Lo-Fi Hip Hop channel is introduced as one project created using Flow Machines.

Flow Machines primarily suggests melodies, chords, and bass lines that match the style the composer is looking for. In this project, the creator chooses among those suggestions using their own human sensibility and proceeds with production.

Research Cases

While several tools have already been released as described above, the NIME 2020 paper A survey on the uptake of Music AI Software shows that these tools are barely used.

Usage status of various music AI tool packages. Green indicates "have never used" (quoted from the paper)

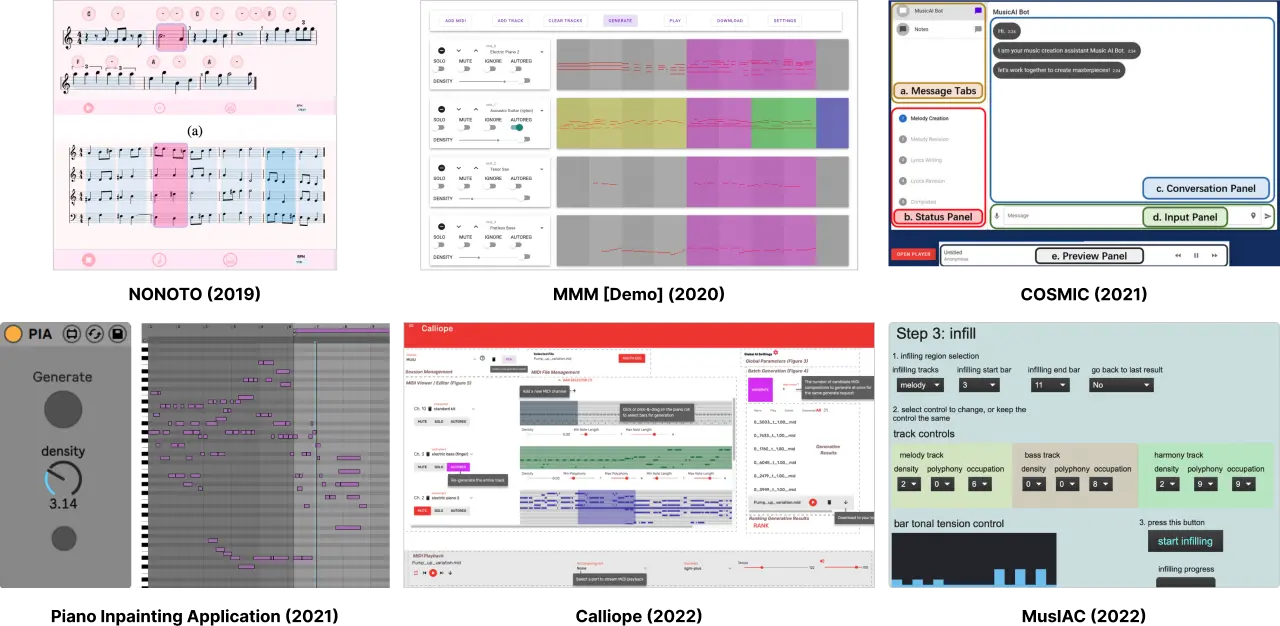

Here, I will introduce the trends in research on interaction with music AI and interfaces for handling them, mainly from 2020 onwards.

What Kind of Interface is Easy to Understand?

For example, when thinking about supporting beginners in composition, operating the parameters for controlling models in the tools introduced above might seem difficult. "Introducing AI to support beginners" is a natural motivation, but in that case, what kind of interface would be optimal?

Novice-AI Music Co-Creation via AI-Steering Tools for Deep Generative Models, a study of tools for co-creating with artificial intelligence, cites as one reason AI tools go unused the fact that they generate too many melodies and that the generated patterns do not stay fixed but vary too randomly.

In Cococo, the system used in this paper, which is built on the Coconet generative model, users select the part they want the AI to generate (a voice: soprano, alto, tenor, or bass) and then steer the character of the generated melody with a major/minor slider and a conventional/surprising slider. With further features such as temporarily swapping in generated parts and choosing which parts keep the user's own melodies, it received higher evaluations than existing interfaces.
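One common way to expose a conventional/surprising control is to map the slider to a sampling temperature over the model's output distribution. The sketch below shows that idea only; it is an assumption for illustration, not a description of how Cococo is implemented, and `slider_to_temperature`, its `low`/`high` range, and the toy logits are all hypothetical.

```python
import numpy as np

def apply_temperature(logits, temperature):
    """Turn raw scores into probabilities, flattened or sharpened by temperature."""
    scaled = np.asarray(logits, dtype=float) / temperature
    scaled -= scaled.max()  # subtract the max for numerical stability
    probs = np.exp(scaled)
    return probs / probs.sum()

def slider_to_temperature(slider, low=0.5, high=2.0):
    """Map a slider in [0, 1] (0 = conventional, 1 = surprising) to a temperature."""
    slider = min(max(slider, 0.0), 1.0)
    return low * (high / low) ** slider  # geometric interpolation between low and high

def sample_pitch(logits, slider, rng):
    """Sample one pitch index using the temperature chosen by the slider."""
    probs = apply_temperature(logits, slider_to_temperature(slider))
    return int(rng.choice(len(probs), p=probs))

# Toy scores for four candidate pitches (assumption, not model output).
logits = np.array([3.0, 1.0, 0.5, 0.1])
conventional = apply_temperature(logits, slider_to_temperature(0.0))
surprising = apply_temperature(logits, slider_to_temperature(1.0))
```

At the conventional end the most likely pitch dominates; at the surprising end the probability mass spreads toward less likely pitches.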

This interface is also used in AI as Social Glue: Uncovering the Roles of Deep Generative AI during Social Music Composition, a study of the role of AI in human-to-human co-creation. The study shows that when artificial intelligence is introduced, it acts as an icebreaker between the human collaborators. Music AI can generate a middle ground or compromise between several composers' melody ideas, and it can also generate something that clearly diverges from all of them, which is said to help co-composers get past deadlocks in discussion and consider a wider range of options.

Furthermore, interaction using natural language dialogue has also been proposed. The advantage of incorporating a chatbot-based interface is that composition can be done simply by humming a melody without needing musical expertise.

In Musical and Conversational Artificial Intelligence, Google Dialogflow is used to hold a conversation about the melody the user wants to create. When the user hums a melody, it is analyzed with the STFT (short-time Fourier transform), and MIDI is generated with Abstract Melodies (Machine Learning of Jazz Grammars), a melody generation method.
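To give a feel for STFT-based analysis of a hummed melody, here is a minimal sketch that picks the strongest frequency bin in each frame and converts it to a MIDI note number. The paper's actual pipeline is not reproduced here: the frame size, hop size, and simple peak picking are assumptions, and real humming contains harmonics and noise that call for a more robust pitch tracker.

```python
import numpy as np

def stft_magnitudes(signal, frame_size=2048, hop=512):
    """Magnitude spectrum of each Hann-windowed frame of the signal."""
    window = np.hanning(frame_size)
    frames = [signal[i:i + frame_size] * window
              for i in range(0, len(signal) - frame_size + 1, hop)]
    return np.abs(np.fft.rfft(np.array(frames), axis=1))

def frame_pitches_midi(signal, sample_rate, frame_size=2048, hop=512):
    """Estimate one MIDI note per frame from the strongest frequency bin."""
    mags = stft_magnitudes(signal, frame_size, hop)
    freqs = np.fft.rfftfreq(frame_size, d=1.0 / sample_rate)
    peak_bins = np.argmax(mags[:, 1:], axis=1) + 1  # skip the DC bin
    peak_freqs = freqs[peak_bins]
    # Standard conversion: A4 = 440 Hz = MIDI note 69, 12 semitones per octave.
    return np.round(69 + 12 * np.log2(peak_freqs / 440.0)).astype(int)

# A one-second pure 440 Hz tone stands in for a hummed A4 (assumption).
sample_rate = 16000
t = np.arange(sample_rate) / sample_rate
hummed_a4 = np.sin(2 * np.pi * 440.0 * t)
notes = frame_pitches_midi(hummed_a4, sample_rate)
```

The resulting per-frame note numbers could then be merged into note events and handed to a melody generation method.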

Also, COSMIC: A Conversational Interface for Human-AI Music Co-Creation proposes an interface that generates both melodies and lyrics using conversational sentences as queries, such as "I want to create slow and sad song."

COSMIC's components all use large, modern deep learning models: BERT for obtaining natural-language representations (encoding), BUTTER for music generation, and CoCon, a GPT-2-based model, for conversational responses.

Optimization for End Users = Musicians

MidiMe is research on personalizing the latent space of Google Magenta's MusicVAE for an individual: it trains a smaller VAE that reconstructs that latent space. The studies below apply this kind of approach.

Generative Melody Composition with Human-in-the-Loop Bayesian Optimization proposes an interface that accommodates each user's different musical preferences while using a single model.

By using Bayesian optimization to efficiently choose which samples from the VAE latent space to present, generation can converge toward the user's preferences in fewer trials (in other words, it optimizes the input to a smaller VAE that reconstructs MusicVAE's latent space).

Also, Interactive Exploration-Exploitation Balancing for Generative Melody Composition applies this user optimization technique and proposes an interface that takes into account which phase of composition the musician is in.
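The loop below is a minimal sketch of human-in-the-loop Bayesian optimization over a latent space, under several assumptions: a Gaussian process with an RBF kernel as the surrogate, an upper-confidence-bound rule for choosing the next sample, and a `simulated_rating` function standing in for a human listener. None of these choices is taken from the paper; in a real system each chosen latent vector would be decoded to a melody and rated by the user.

```python
import numpy as np

def rbf_kernel(a, b, length_scale=1.0):
    """RBF kernel matrix between two sets of latent vectors."""
    sq_dist = ((a[:, None, :] - b[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-0.5 * sq_dist / length_scale ** 2)

def gp_posterior(x_seen, y_seen, x_cand, noise=1e-3):
    """Posterior mean and std of a zero-mean GP at the candidate points."""
    k_ss = rbf_kernel(x_seen, x_seen) + noise * np.eye(len(x_seen))
    k_sc = rbf_kernel(x_seen, x_cand)
    solve = np.linalg.solve(k_ss, k_sc)
    mean = solve.T @ y_seen
    var = 1.0 - np.sum(k_sc * solve, axis=0)  # RBF prior variance is 1
    return mean, np.sqrt(np.clip(var, 1e-12, None))

def simulated_rating(z, taste):
    """Stand-in for a human rating: higher when z is near the hidden taste."""
    return float(np.exp(-0.5 * np.sum((z - taste) ** 2)))

def optimize_preferences(taste, n_rounds=15, n_candidates=200, kappa=2.0, seed=0):
    """Repeatedly pick the latent vector with the best upper confidence bound."""
    rng = np.random.default_rng(seed)
    dim = len(taste)
    x_seen = rng.normal(size=(2, dim))  # two random melodies to start
    y_seen = np.array([simulated_rating(z, taste) for z in x_seen])
    for _ in range(n_rounds):
        candidates = rng.normal(size=(n_candidates, dim))
        mean, std = gp_posterior(x_seen, y_seen, candidates)
        z_next = candidates[np.argmax(mean + kappa * std)]
        x_seen = np.vstack([x_seen, z_next])
        y_seen = np.append(y_seen, simulated_rating(z_next, taste))
    return x_seen, y_seen

hidden_taste = np.array([0.5, -0.5, 0.3, 0.0])  # hypothetical user preference
x_seen, y_seen = optimize_preferences(hidden_taste)
```

The point of the surrogate is that each new melody shown to the user is chosen using all ratings so far, rather than sampled blindly.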

In interaction research on Creativity Support Tools (CST), creative activity is said to have two phases, Ideation and Refinement. These can be read as two stages: broadly trying out various ideas, and then refining the ideas that have taken shape. The Exploration (searching) and Exploitation (deepening) sliders provided in this research control how strongly generation is optimized toward the user's preferences. In the exploration phase, you can generate melody patterns you would not normally prefer; in the exploitation phase, you can pick and choose from preferred melodies that change gradually.
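One simple way to think about such a control is as a weight between the predicted preference for a candidate and the uncertainty about it. The sketch below assumes a single hypothetical `exploration` value in [0, 1] and toy predictions; the sliders in the paper may be defined differently.

```python
import numpy as np

def pick_candidate(pred_mean, pred_std, exploration):
    """Choose a candidate index; exploration=0 exploits, exploration=1 explores."""
    exploration = float(np.clip(exploration, 0.0, 1.0))
    score = ((1.0 - exploration) * np.asarray(pred_mean, dtype=float)
             + exploration * np.asarray(pred_std, dtype=float))
    return int(np.argmax(score))

# Toy predictions for three candidate melodies (assumption):
# predicted preference and how uncertain that prediction is.
pred_mean = [0.9, 0.6, 0.2]
pred_std = [0.05, 0.2, 0.8]

refine_choice = pick_candidate(pred_mean, pred_std, exploration=0.0)
ideate_choice = pick_candidate(pred_mean, pred_std, exploration=1.0)
```

With the weight at 0 the melody predicted to be most liked is chosen; with the weight at 1 the least-known melody is chosen, which matches the ideation-then-refinement reading above.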

Application to Works and Practice

Let's look at how these techniques are being applied to actual works so far.

Works Using Conditionally Controllable Models Such as VAE

Claire Evans of the American band YACHT (Young Americans Challenging High Technology) gave a talk at Google I/O '19, and the band has released the album Chain Tripping, which was made using MusicVAE.

Designing Interaction with Music

Beyond supporting composition, using artificial intelligence to automatically generate melodies and beats opens up many other possibilities.

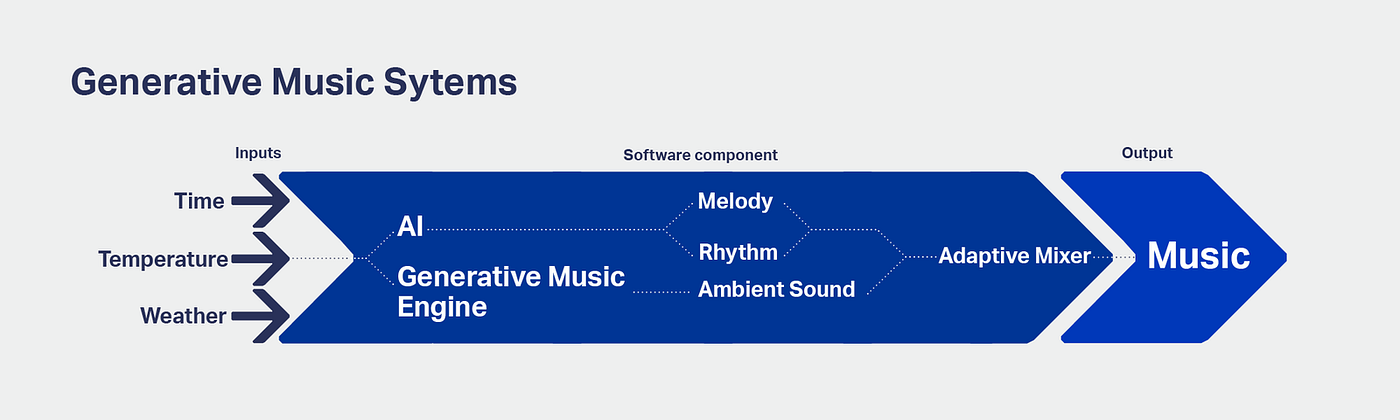

In the music generation system that Qosmo developed to automatically generate BGM for Shiseido's flagship store in Ginza, melodies and rhythms generated by artificial intelligence are mixed with rule-based, algorithmically generated sounds. By monitoring the time of day, weather, and temperature as inputs, the system creates dynamically changing BGM that adapts to the environment.

Image quoted from the Qosmo site

As a practice in designing musical experiences with symbolic melody generation models like those introduced above, I have also been working on continuously generating melodies from interactions with the space in an MR (Mixed Reality) environment, automatically producing BGM unique to the place where the experience is happening.

Making music from sounds generated by objects floating in MR space

Sounds generated by the objects are treated as melodies, and a trained MusicVAE model's encoder and decoder are used to continuously generate new melodies from them.

Conclusion

In reality, many challenges remain before music AI tools for composition support are widely used in actual production settings. I feel it is important to survey what musicians, the users, seek from composing and from the experience of composing, and to design music generation functions and interaction with AI that match those needs.

At the same time, music AI can be used not only for composition support but also to design new ways of engaging with music, such as "listening to and creating new music that is generated in real time one after another," and to design interaction with the music as it plays.

The CCLab music team and I continue to create works and systems while thinking about what kinds of interesting experiences can be realized.