Trends and Practices in Music and Human-Computer Interaction Research (2020)

This article is a repost from Medium.

Music and Human-Computer Interaction Research

Human-Computer Interaction (HCI) is a field that studies the overall interaction between humans and information technology, from software and I/O devices to interfaces, visualization, audification, and materials. Within HCI, there is a domain called Music and HCI that deals specifically with music.

In Simon Holland's book Music and HCI from the Open University Music Computing Lab, the research domain is described as:

"Music Interaction encompasses the design, refinement, evaluation, analysis and use of interactive systems that involve computer technology for any kind of musical activity"

This shows how diverse the subjects, motivations, and approaches of this research are. Additionally, the research report by Takekawa et al. [1] summarizes music interaction research from three perspectives: composition/arrangement support, instrument creation support, and learning support. Beyond these, as AI technology has advanced and AR (augmented reality) and VR (virtual reality) environments have spread, a growing body of research applies them to new kinds of works, experience design (XD), and music generation.

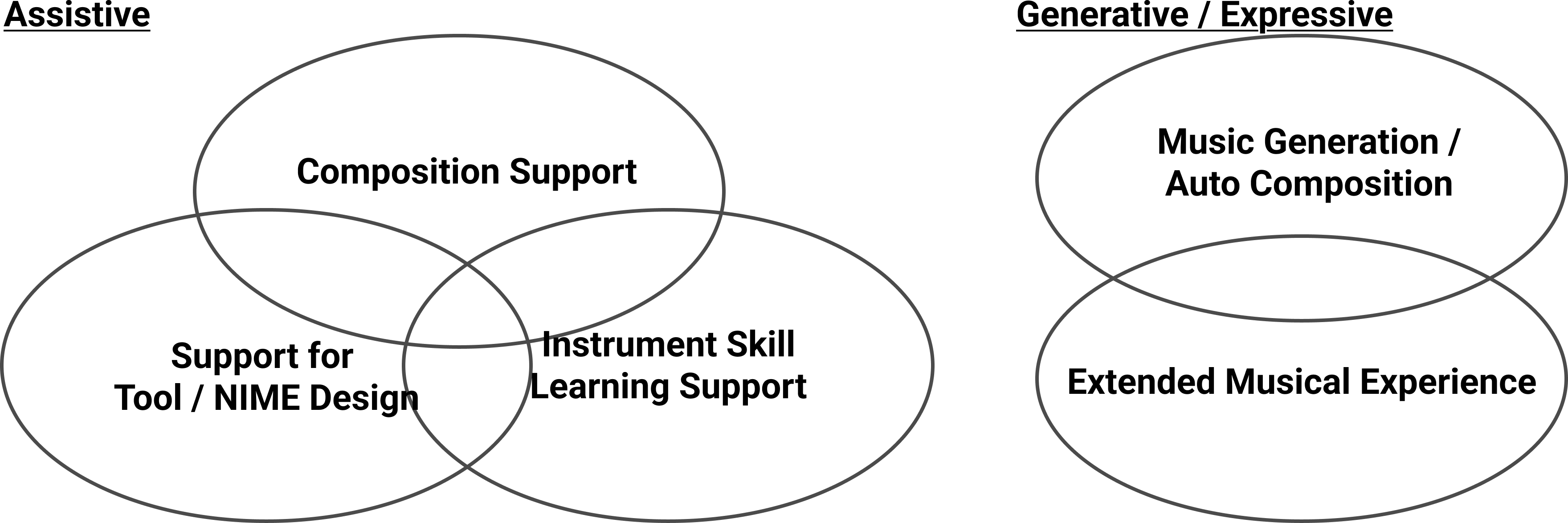

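Therefore, I have classified the approaches found in music and HCI research into the following five categories: (I) instrument creation support, (II) composition/arrangement support, (III) learning support, (IV) music generation, and (V) musical experience extension and creation.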

Diverse Approaches in Music and HCI Research

While these classifications cannot be completely separated, this article introduces several research cases from these perspectives and also describes the content of my own graduation research.

I. Instrument Creation Support (Music Production Environment)

Many new digital musical instruments (DMIs) are presented at international conferences such as NIME (New Interfaces for Musical Expression) and at the IPSJ Music and Computer Study Group (SIGMUS). As platforms for building these electronic instruments, many programming environments and libraries for composition have been developed, including OpenMusic (developed by IRCAM since the 1990s), Max, Pure Data, TidalCycles, FAUST, ChucK, and mimium (developed by Matsuura at Kyushu University). For hardware instrument prototyping, Arduino and Gainer are often used. MIDI and Open Sound Control (OSC) are used for communication between hardware and software, and communication libraries such as libmapper have also been developed. There is also research and development on new communication protocols for computer music, such as RMCP (Remote Music Control Protocol) and MIDI 2.0, the first update to MIDI in 38 years.
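To make the two protocols mentioned above more concrete, here is a minimal sketch of what their messages look like on the wire. It follows the published OSC 1.0 and MIDI 1.0 byte layouts, but the helper names and the `/synth/cutoff` address are my own assumptions for illustration and do not come from any of the tools listed above.

```python
import struct


def _osc_string(text: str) -> bytes:
    """Encode an OSC string: ASCII bytes, null-terminated, padded to a multiple of 4."""
    raw = text.encode("ascii") + b"\x00"
    padding = (-len(raw)) % 4
    return raw + b"\x00" * padding


def encode_osc_float(address: str, value: float) -> bytes:
    """Build an OSC 1.0 message carrying a single big-endian float32 argument."""
    return _osc_string(address) + _osc_string(",f") + struct.pack(">f", value)


def midi_note_on(channel: int, note: int, velocity: int) -> bytes:
    """Build a 3-byte MIDI 1.0 note-on message (channel 0-15, note and velocity 0-127)."""
    if not (0 <= channel <= 15 and 0 <= note <= 127 and 0 <= velocity <= 127):
        raise ValueError("channel must be 0-15; note and velocity must be 0-127")
    return bytes([0x90 | channel, note, velocity])


# Hypothetical address pattern; a real instrument defines its own namespace.
packet = encode_osc_float("/synth/cutoff", 0.5)
note = midi_note_on(channel=0, note=60, velocity=100)
```

An OSC packet like this is usually sent over UDP, which in Python would be one `socket.sendto` call to whatever host and port the receiving software listens on. The contrast in size (24 bytes for one named float versus 3 bytes for a note-on) hints at why OSC is often preferred for expressive, high-resolution control data while MIDI remains common for note events.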

II. Composition/Arrangement Support (Software Support)

Research and development is progressing on systems that support human composition and arrangement by providing environments in which the computer itself composes, driven by mathematics or external factors.

Orpheus (2006) from the Sagayama Laboratory at the University of Tokyo is an automatic composition system that composes music to match lyrics entered by the user. After morphological analysis of the input lyrics, the system estimates their prosody and assigns notes. Typical rhythm patterns are defined as "standard rhythms" and assigned to generate the music.

Hyperscore (2012) by the Opera of the Future group at MIT Media Lab lets users compose by drawing with a pen tool on a user interface where the vertical axis represents pitch and the horizontal axis represents time; the pen color changes the timbre. This makes interaction in composition intuitive, in a way similar to the interface of UPIC, the computer system devised by Xenakis.

Flow Machines: AI Assisted Music, which Sony CSL has been researching and developing since 2014, is a research and social implementation project that aims to extend the creativity of music creators, and it has led to many products.

III. Learning Support

Today, video game-based piano and guitar learning (such as Rocksmith) has become widespread, and electronic instruments come with built-in tutorial features (V-Drums, for example, include a training function). Piano Tutor (1990) [2], developed at Carnegie Mellon University, is a pioneering study in AI-powered performance learning support. It tracks the learner's performance to turn pages automatically, presents model performances via video and audio, and analyzes learner performance data to give guidance for improvement, an impressive feature set for its time.

More recently, a practice support system that uses fingering recognition technology to project information directly onto piano keys (2011) [3] has been published, as has a drum practice support system (2015) [4] that extracts 31 feature parameters from the timing deviation and striking intensity of recorded drum performances to estimate performance proficiency and provide feedback. Also, Strummer [5], a study from the Yatani Laboratory at the University of Tokyo (iis-lab), uses music data from 727 songs with chord labels and recognizes through acoustic signal analysis whether users are playing the correct chords, then provides feedback. In its evaluation, five guitar beginners each practiced guitar for 5 hours.
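As a rough illustration of what "features from timing deviation and striking intensity" could mean in practice, the sketch below measures how far each drum hit lands from a metronome grid and summarizes the result. This is only an assumption-laden toy: the four statistics, the function names, and the eighth-note grid are hypothetical and are not the 31 parameters used in [4].

```python
from statistics import mean, pstdev


def timing_deviations(onsets_sec, bpm, subdivisions_per_beat=2):
    """Signed distance (seconds) of each onset from its nearest metronome grid point."""
    grid = 60.0 / bpm / subdivisions_per_beat
    return [t - round(t / grid) * grid for t in onsets_sec]


def summarize(onsets_sec, velocities, bpm):
    """Hypothetical feature vector: average and spread of timing and intensity."""
    deviations = timing_deviations(onsets_sec, bpm)
    return {
        "mean_deviation_sec": mean(deviations),      # rushing (<0) or dragging (>0)
        "deviation_spread_sec": pstdev(deviations),  # steadiness of timing
        "mean_velocity": mean(velocities),           # overall striking intensity
        "velocity_spread": pstdev(velocities),       # evenness of striking intensity
    }


# Made-up recording: eighth notes at 120 BPM, with slightly uneven hits.
features = summarize(
    onsets_sec=[0.0, 0.26, 0.49, 0.75],
    velocities=[90, 70, 95, 72],
    bpm=120,
)
```

A proficiency estimator would then map such a feature vector to a score, for example with a regression model trained on performances rated by teachers; that step is left out here because the source does not describe it.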

IV. Music Generation

The first computer composition (score generation) was the "Illiac Suite for String Quartet," generated on the ILLIAC computer in 1957. The program consisted of a module that generates pitch sequences based on Markov chains and random number generation, and a module that evaluates the generated results using rules based on counterpoint and harmony.
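The two-module structure described above (a random generator followed by a rule-based evaluator) can be sketched as a generate-and-test loop. The code below is a toy under stated assumptions, not a reconstruction of the Illiac Suite program: the C major scale, the step-favouring transition weights, and the two rules are all hypothetical choices made for illustration.

```python
import random

# Assumed pitch set: one octave of C major as MIDI note numbers.
SCALE = [60, 62, 64, 65, 67, 69, 71, 72]


def next_pitch(current, rng):
    """First-order Markov step: the next pitch depends only on the current one.

    The weights favour small scale steps (weight = 1 / distance in scale degrees).
    """
    i = SCALE.index(current)
    candidates = [p for j, p in enumerate(SCALE) if j != i]
    weights = [1.0 / abs(j - i) for j in range(len(SCALE)) if j != i]
    return rng.choices(candidates, weights=weights, k=1)[0]


def generate(length, rng, start=60):
    """Generator module: produce a pitch sequence by repeated Markov steps."""
    melody = [start]
    while len(melody) < length:
        melody.append(next_pitch(melody[-1], rng))
    return melody


def passes_rules(melody, max_leap=7, finals=(60, 72)):
    """Evaluator module: toy rules (no leap above a fifth, end on the tonic)."""
    leaps_ok = all(abs(b - a) <= max_leap for a, b in zip(melody, melody[1:]))
    return leaps_ok and melody[-1] in finals


def compose(length=8, seed=0, max_tries=10_000):
    """Keep generating candidates until one satisfies every rule."""
    rng = random.Random(seed)
    for _ in range(max_tries):
        melody = generate(length, rng)
        if passes_rules(melody):
            return melody
    raise RuntimeError("no melody satisfied the rules")


melody = compose()
```

Separating generation from evaluation keeps the musical knowledge in one readable place: adding a new rule means adding one more check to `passes_rules`, with no change to the random process.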

As computers became more widespread, they came to be used extensively for algorithmic composition, a compositional technique in which algorithms or fixed procedures determine the music in deterministic or probabilistic ways.

Since then, research on generating melodies, rhythm patterns, and chord progressions using statistical models has increased, and in recent years many neural network-based music generation methods have been proposed. Approaches exist for both generating MIDI sequences, scores, etc. (symbolic domain) and directly generating waveforms (audio domain).

Google Magenta has already released Magenta Studio, software that can generate melodies (MIDI) as a plugin. Many websites where you can listen to AI-generated music, beginning with OpenAI's Jukebox, as well as composition services like Boomy, have also appeared.

V. Musical Experience Extension and Creation

Research and development of interfaces and platforms aimed at multi-sensory enhancement of musical performance and listening experiences and the creation of new experiences is progressing.

A famous example of a new musical interface is Miburi, an instrument released by Yamaha in 1995 that consists of wearables incorporating sensors and speakers. With sensors attached to the performer's fingers, wrists, elbows, shoulders, and feet, it allows performance based on the performer's body movements and steps, and it attracted many performers.

Otamatone by Maywa Denki (2009) is another new musical interface that has become popular in recent years. The Otamatone can produce sounds resembling a person singing, supports various playing techniques such as push and portamento playing, and has many users.

On the other hand, there is also research that takes the approach of extending existing instruments. Cyber Shakuhachi (1997) is an example of a system that improves the performance experience without compromising the original instrument's feel and musical perspective. It was designed to preserve the shakuhachi's inherent musical qualities as far as possible and to minimize any sense of discomfort for the performer.

An example of research that departs substantially from the original instrument's sound output is MIGSI (Minimally Invasive Gesture Sensing Interface) (2018), a trumpet that can control sound effects and visual art in real time by capturing gesture data such as valve displacement and instrument position. What distinguishes MIGSI is that it is not only an experimental prototype but has also given rise to rich user interfaces and musical compositions.

MIGSI Interface (Quoted from https://cycling74.com/articles/an-interview-with-sarah-belle-reid)


Other examples include music recommendation system research as an extension of music experiences using MIR (Music Information Retrieval). Research includes recommendation by collaborative filtering, similar music recommendation by feature extraction from songs (Bag-of-features, etc.), context-aware recommendation considering the user's situation, and research on searching for songs from emotional language expressions.
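Of the recommendation approaches listed above, collaborative filtering is the easiest to show in a few lines. The following is a minimal user-based sketch, assuming play counts as the only signal; the users, songs, and counts are made up, and real systems typically use far larger matrices and more robust similarity measures.

```python
from math import sqrt

# Hypothetical listening history: user -> {song: play count}.
PLAYS = {
    "ana": {"song_a": 5, "song_b": 3},
    "ben": {"song_a": 4, "song_b": 2, "song_c": 5},
    "cho": {"song_b": 1, "song_c": 4, "song_d": 3},
}


def cosine(u, v):
    """Cosine similarity between two sparse play-count vectors."""
    shared = set(u) & set(v)
    dot = sum(u[s] * v[s] for s in shared)
    norm = sqrt(sum(x * x for x in u.values())) * sqrt(sum(x * x for x in v.values()))
    return dot / norm if norm else 0.0


def recommend(user, plays, top_n=3):
    """Rank songs the user has not played by similarity-weighted play counts."""
    scores = {}
    for other, history in plays.items():
        if other == user:
            continue
        similarity = cosine(plays[user], history)
        for song, count in history.items():
            if song not in plays[user]:
                scores[song] = scores.get(song, 0.0) + similarity * count
    return sorted(scores, key=scores.get, reverse=True)[:top_n]


print(recommend("ana", PLAYS))  # → ['song_c', 'song_d']
```

Here `song_c` ranks first because `ben`, whose history overlaps most with `ana`'s, plays it often. Content-based methods such as bag-of-features would instead compare the audio of the songs themselves, which helps for new songs that nobody has played yet.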

There are also many examples of using musical interfaces for interactive performances. One of the classic examples is Brain Opera (1996), a performance by MIT Media Lab. Participants composed music using various electronic music devices, and these compositions were combined into one work. Various new instrument devices were developed for it, including the Harmonic Driving joystick, Melody Easel, Sensor Chair, and Rhythm Tree.

Additionally, VR-based musical experiences have already been applied to media works such as music videos.

Conclusion

In this article, I have introduced case studies from various perspectives in music and human-computer interaction research. This domain will continue to develop, and there are still new approaches beyond the five perspectives introduced here, as well as composite domains that have received little attention so far.

References

[1] 音楽とヒューマン・コンピュータ・インタラクション 竹川佳成(公立はこだて未来大学)— 526 情報処理 Vol.57 №6 June 2016

[2] Dannenberg, Roger & Sanchez, Marta & Joseph, Annabelle & Capell, Peter & Joseph, Robert & Saul, Ronald. (1990). A computer‐based multi‐media tutor for beginning piano students. Journal of New Music Research. 19. 155–173.

[3] 竹川佳成, 寺田努, 塚本昌彦. 運指認識技術を活用したピアノ演奏学習支援システムの構築. 情報処理学会論文誌, Vol. 52, №2, pp. 917–927, Feb. 2011.

[4] 安井希子, 三浦雅展. ドラム基礎演奏の練習支援システム(システム論文特集号). 日本音響学会誌, Vol. 71, №11, pp. 601–604, 2015.

[5] S Ariga, M Goto, and K Yatani. Strummer: An interactive guitar chord practice system. In 2017 IEEE International Conference on Multimedia and Expo (ICME), pp. 1057–1062, July 2017.

[6] 吉井和佳, 2. 音楽と統計的記号処理, 映像情報メディア学会誌, 2017, 71 巻, 7 号, p. 457–461.